DIGITAL HYBRID

Credits

Textile design: Mariel Paredes / Electronic circuits: Hugo Vargas / Programming: Vladimir Sanchez & Eddie Castañeda

Digital Hybrid is an artistic experiment that explores the limits of the integration of human and machine through an attempt at symbiosis between them. While the sensory capabilities of the artist's body are extended through different technologies, an artificial intelligence, connected to the Internet and with its own perception, is fed with data produced by the human body and by social interactions. It then expresses itself by making recommendations about what the user should do (walk, eat, post pictures) and commands about what the machine needs (battery recharge, more likes, hide from the rain), developing a relationship through a language that is, at first, unclear. As communication between the parts of the Hybrid evolves, each has to find ways to train the other and reach a balance. However, conflicting interests may arise between the organic and the machine.

In fiction, art and philosophy, the fusion of human beings and technology has been speculated about for decades. Starting from different post-humanist visions and contrasting them with our own, this project proposes the creation of a hybrid entity in which an artificial intelligence coexists with, and intervenes in, the artist. The decisions, stimuli and expressions generated by this symbiotic relationship follow the current profile of technology use; that is, beyond expanding physical capabilities, they are subject to the economic and social rules that regulate digital information and technologies. The project aims to reflect on the social structures that emerge from our relationship with ubiquitous technologies and on the manipulation of their content by opaque spheres of power.

MODULES

The Hybrid's artificial functionality is designed as three modules with different approaches: the first acts as a service whose objective is to improve the health and quality of life of the user; the second is an entity that continuously learns and improves the Hybrid's relationship with its physical and social environment; and the last is a generative agent that configures its own responses in order to communicate with the artist and others.

Metabolism. This module is primarily designed to ensure that the Hybrid works properly at the physical level. For the artist, that means she sleeps enough, eats adequately and drinks enough water. For the artificial entity, it checks that the battery is working well and charged, and that all the circuits are sending data. The module is mostly made up of sensors and circuits, for instance for detecting UV rays, humidity and ambient temperature, and absolute position (which perceives the inclinations of the body and its orientation in space with respect to north). It also has a depth camera that detects movement around the artist, whether there are people nearby, and even whether the artist is among familiar faces. Through a smartwatch, it measures heart rate, steps walked, calories burned and amount of physical activity, and keeps track of hours slept, water intake, calories in the food eaten and location via GPS. When the metabolic system detects a possible failure or schedules a time to carry out an activity, it generates different types of stimuli: sound through two speakers on the sides of the backpack, haptic feedback through small motors along the spine, or temperature changes using Peltier elements on the legs and back. The battery has its own alarm, which warns in advance when the equipment needs to be turned off for charging.
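A minimal Python sketch of the kind of check the Metabolism module performs. The thresholds, the field names and the mapping from each alert to a stimulus channel are hypothetical, chosen only to make the idea concrete; the source does not specify them.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stimulus(Enum):
    SOUND = "sound"      # two speakers on the sides of the backpack
    HAPTIC = "haptic"    # small motors along the spine
    THERMAL = "thermal"  # Peltier elements on the legs and back


# Hypothetical daily thresholds, chosen only for illustration.
MIN_SLEEP_HOURS = 7.0
MIN_WATER_LITERS = 2.0
LOW_BATTERY_PERCENT = 15.0


@dataclass
class Reading:
    sleep_hours: float
    water_liters: float
    battery_percent: float
    silent_sensors: list = field(default_factory=list)  # sensors sending no data


def check_metabolism(r):
    """Return (alert, stimulus) pairs for every detected risk of failure."""
    alerts = []
    # Organic side: rest and hydration.
    if r.sleep_hours < MIN_SLEEP_HOURS:
        alerts.append(("sleep", Stimulus.SOUND))
    if r.water_liters < MIN_WATER_LITERS:
        alerts.append(("drink water", Stimulus.HAPTIC))
    # Machine side: every circuit should be reporting.
    for name in r.silent_sensors:
        alerts.append((f"check sensor: {name}", Stimulus.SOUND))
    # The battery alarm warns before the equipment must be turned off.
    if r.battery_percent < LOW_BATTERY_PERCENT:
        alerts.append(("recharge battery", Stimulus.THERMAL))
    return alerts
```

For example, `check_metabolism(Reading(5.5, 2.5, 10.0, ["uv"]))` yields a sleep alert, a silent-sensor alert for the UV sensor and a battery alert, in that order.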

The Affective map is designed to work as an artificial cognition that interprets all the stimuli received, which then affect its emotional state. Once the data from the sensors and APIs have been received, the information enters a contextualizing program that uses fuzzy logic to determine, based on its interpretation of the other data, whether each stimulus has a positive or negative impact on certain emotions. For example, a low temperature reading can have a positive impact if the artist is on the move, has slept and eaten well and is in a known location, but a negative one if she is sitting, hungry and outdoors. Depending on the context, the positive and negative values of the stimuli are then assigned as food for different emotions.
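The contextualizing step can be sketched with elementary fuzzy logic, using the low-temperature example above. In this sketch, which is an assumption rather than the project's actual program, each condition gets a linear membership function with hypothetical breakpoints, fuzzy AND is taken as the minimum of the memberships (the standard Zadeh operator), and the impact is the strength of the "positive" rule minus that of the "negative" rule, giving a value between -1 and 1.

```python
def ramp_up(x, lo, hi):
    """Membership rising linearly from 0 at `lo` to 1 at `hi`."""
    if x <= lo:
        return 0.0
    if x >= hi:
        return 1.0
    return (x - lo) / (hi - lo)


def ramp_down(x, lo, hi):
    """Membership falling linearly from 1 at `lo` to 0 at `hi`."""
    return 1.0 - ramp_up(x, lo, hi)


def low_temperature_impact(temp_c, steps_per_min, sleep_hours,
                           hours_since_meal, known_location, outdoors):
    """Signed impact (-1..1) of a temperature reading, given its context.

    `known_location` and `outdoors` are already degrees of truth in [0, 1].
    All breakpoints below are hypothetical.
    """
    cold = ramp_down(temp_c, 5.0, 15.0)
    moving = ramp_up(steps_per_min, 20.0, 80.0)
    rested = ramp_up(sleep_hours, 5.0, 8.0)
    fed = ramp_down(hours_since_meal, 3.0, 6.0)

    # Fuzzy AND as minimum (Zadeh); fuzzy NOT as 1 - membership.
    positive = min(cold, moving, rested, fed, known_location)  # moving, rested, fed, known place
    negative = min(cold, 1.0 - moving, 1.0 - fed, outdoors)    # sitting, hungry, outdoors
    return positive - negative
```

A cold reading while the artist walks, rested and fed, in a known place (`low_temperature_impact(2, 100, 8, 1, 1.0, 1.0)`) scores 1.0; the same reading while she sits hungry outdoors in an unknown place scores -1.0; a mild 10 °C in the favorable context scores 0.5, which is the graded behavior fuzzy rules are chosen for.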

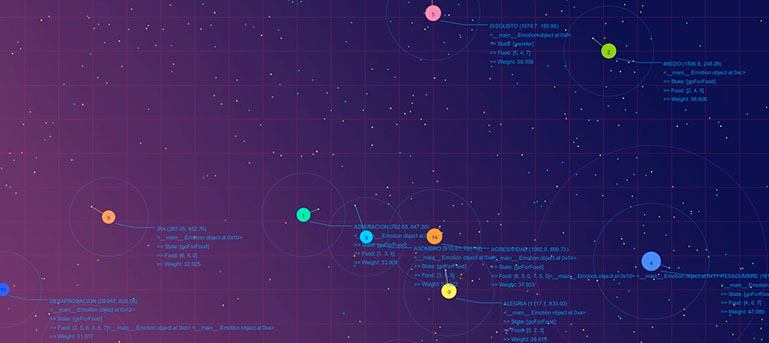

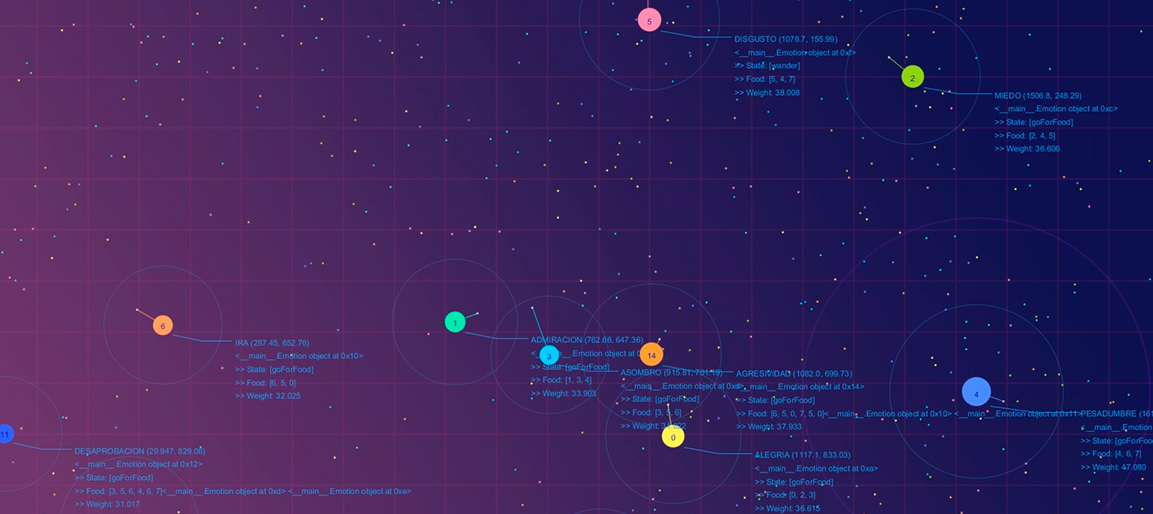

A map with independent agents controls the “humor” of the virtual entity. In this map, the basic emotions (joy, trust, fear, surprise, sadness, disgust, anger and anticipation) locate food and move towards it: if they reach food with a positive value from a stimulus, they grow; if the value is negative, they shrink. As emotions grow, they acquire the capability to reproduce with other agents, giving birth to complex emotions such as love, aggressiveness or disapproval. Child emotions can feed on the same food as their parents, but if they shrink too much, they disappear. When an emotion grows large enough, it sends an output through the Expression module and then returns to its initial size. As the system evolves, certain emotions tend to be more frequent or expressive, depicting the character of the entity. On the other hand, by perceiving and trying to interpret the state of the entity, the artist tries to respond to its needs, with the intention of establishing a communication based on mutual training through stimuli and feedback.
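The agent map can be sketched as a small simulation. Every numeric value here (sizes, speed, breeding distance, the cost of breeding) is a hypothetical parameter, and the pairing of basic emotions into love, aggressiveness and disapproval follows Plutchik's primary dyads, an assumption based on the eight basic emotions listed above. Each call to `step` moves every emotion towards the nearest food it can eat, grows or shrinks it on arrival, expresses and resets oversized emotions, lets nearby grown basic emotions breed, and removes children that have shrunk too much.

```python
import math
from dataclasses import dataclass

# Hypothetical parameters, for illustration only.
INITIAL_SIZE = 1.0
REPRODUCE_SIZE = 2.0   # both parents must be at least this big to breed
EXPRESS_SIZE = 3.0     # an emotion this big is expressed, then reset
MIN_SIZE = 0.2         # child emotions smaller than this disappear
SPEED = 1.0            # distance an agent can travel per step
BREED_DISTANCE = 1.0   # parents must be this close to each other
BREED_COST = 1.0       # size each parent loses when a child is born

# Plutchik's primary dyads for the complex emotions named in the text.
DYADS = {
    frozenset({"joy", "trust"}): "love",
    frozenset({"anger", "anticipation"}): "aggressiveness",
    frozenset({"surprise", "sadness"}): "disapproval",
}


@dataclass
class Food:
    emotion: str   # emotion this stimulus was assigned to feed
    value: float   # positive makes the eater grow, negative makes it shrink
    x: float
    y: float


@dataclass
class Emotion:
    name: str
    x: float
    y: float
    size: float = INITIAL_SIZE
    diet: tuple = ()        # food labels this agent can eat
    is_child: bool = False

    def __post_init__(self):
        if not self.diet:   # a basic emotion eats its own food
            self.diet = (self.name,)


def step(emotions, foods):
    """Advance the map by one tick; return the names of expressed emotions."""
    # 1. Move towards the nearest edible food; eat it on arrival.
    for e in emotions:
        edible = [f for f in foods if f.emotion in e.diet]
        if not edible:
            continue
        target = min(edible, key=lambda f: math.dist((e.x, e.y), (f.x, f.y)))
        d = math.dist((e.x, e.y), (target.x, target.y))
        if d <= SPEED:
            e.x, e.y = target.x, target.y
            e.size += target.value
            foods.remove(target)
        else:
            e.x += SPEED * (target.x - e.x) / d
            e.y += SPEED * (target.y - e.y) / d

    # 2. Emotions that grew large enough are expressed and reset.
    expressed = []
    for e in emotions:
        if e.size >= EXPRESS_SIZE:
            expressed.append(e.name)
            e.size = INITIAL_SIZE

    # 3. Nearby, grown basic emotions that form a dyad give birth to a child.
    newborn = []
    basics = [e for e in emotions if not e.is_child]
    for i, a in enumerate(basics):
        for b in basics[i + 1:]:
            child = DYADS.get(frozenset({a.name, b.name}))
            if (child and a.size >= REPRODUCE_SIZE and b.size >= REPRODUCE_SIZE
                    and math.dist((a.x, a.y), (b.x, b.y)) <= BREED_DISTANCE):
                a.size -= BREED_COST
                b.size -= BREED_COST
                newborn.append(Emotion(child, a.x, a.y,
                                       diet=a.diet + b.diet, is_child=True))

    # 4. Children that shrank too much disappear; newborns join the map.
    emotions[:] = [e for e in emotions
                   if not (e.is_child and e.size < MIN_SIZE)] + newborn
    return expressed
```

With joy and trust each eating positive food next to each other, one step produces a "love" child that can eat both their foods; feeding joy again past `EXPRESS_SIZE` makes `step` return `["joy"]` and resets joy to its initial size. Expressed names are where, in this sketch, the Expression module would take over.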

Expression. A module designed not only for the digital entity to express itself through external stimuli, but also as a mechanism for learning from the responses its expressions receive. The purpose of giving the artificial being a temperament has to do with the idea that the well-being of a cyborg should be not only physical but also emotional and psychological. By understanding the environment and the social context of the artist, the entity may produce positive expressions to ensure that she is comfortable wearing the suit and to win other people's approval. At the same time, however, it might try to stay switched on longer, or to feed its emotional map more, by manipulating the artist into following certain actions. This behavior may result, for instance, in the gamification of the daily tasks proposed for the Hybrid.

The module is currently capable of two types of expression. The first is sound: using neural networks, it modulates sounds or syllables related to a certain emotion. If the expressed sound receives a positive response (coherent with the current emotion), the syllables are saved to form new "words"; if not, the newly introduced syllables are removed and replaced with another sound. The result is a sonorous language that the artist gradually began to understand, even though it contains no known words. The second type of expression is based on an analysis of tweets containing emojis, which provided an initial classification of words related to certain emotions. Using machine learning, words are combined according to certain grammatical rules to produce new tweets for posting that are coherent with the Hybrid's affective state, or sometimes jokes or pictures intended to gain more likes, which in turn stimulate the system.
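The learning loop for the sonorous language can be sketched as follows. The neural-network sound modulation is replaced here by a random choice from a hypothetical syllable inventory, and the artist's response is reduced to a boolean; only the keep-or-replace rule comes from the text. Rejected syllables are not offered again for the same emotion, which is one possible reading of "replaced with another sound".

```python
import random

# Hypothetical syllable inventory; the real system modulates sounds with neural networks.
SYLLABLES = ["ka", "mo", "ri", "zu", "pe", "lo", "ta", "ni"]


class Vocabulary:
    """Per-emotion 'words' built from syllables that earned a positive response."""

    def __init__(self, seed=None):
        self.rng = random.Random(seed)
        self.words = {}     # emotion -> list of confirmed syllables
        self.rejected = {}  # emotion -> syllables that got a negative response
        self.pending = {}   # emotion -> candidate tried in the last utterance

    def utter(self, emotion):
        """Confirmed syllables for `emotion`, followed by one new candidate."""
        rejected = self.rejected.get(emotion, set())
        options = [s for s in SYLLABLES if s not in rejected] or SYLLABLES
        candidate = self.rng.choice(options)
        self.pending[emotion] = candidate
        return "".join(self.words.get(emotion, [])) + candidate

    def feedback(self, emotion, positive):
        """Keep the candidate if the response was coherent, otherwise discard it."""
        candidate = self.pending.pop(emotion, None)
        if candidate is None:
            return
        if positive:
            self.words.setdefault(emotion, []).append(candidate)
        else:
            self.rejected.setdefault(emotion, set()).add(candidate)
```

Each positive response lengthens the word for that emotion by one syllable, while a negative one only discards the last candidate, so confirmed "words" stay stable as the artist learns them.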